What if the AI future being sold to markets rests on claims that cannot survive scrutiny? From superintelligence to mass job loss, the loudest promises around generative AI begin to look less like foresight than hype dressed up as inevitability.

Frontier generative Artificial Intelligence (AI) companies are spending billions and billions of dollars on developing, training and running their algorithms, supposedly in pursuit of some notion of ‘superintelligence’. To sustain the hype and keep financial investors enthralled, their CEOs are ‘flooding the zone’ with a deluge of unfalsifiable predictions, untethered from evidence, ranging from mass technological unemployment and Yudkowskian fears of human extinction to utopian vistas of a cornucopia of riches, unprecedented scientific progress and exponential growth in productivity created by ubiquitous automation.

In a new INET Working Paper, I attempt to empirically scrutinize the most prominent, but also most ludicrous, predictions made by Silicon Valley’s CEOs. To stay sane in the face of the tsunami of unhinged prognostications, I rely on Bertrand Russell’s (1952) Teapot Analogy. Russell argued that the burden of proof lies on those making unfalsifiable claims, rather than on others to disprove them: “If I were to suggest that between the Earth and Mars there is a china teapot revolving about the sun in an elliptical orbit, nobody would be able to disprove my assertion provided I were careful to add that the teapot is too small to be revealed even by our most powerful telescopes. But if I were to go on to say that, since my assertion cannot be disproved, it is intolerable presumption on the part of human reason to doubt it, I should rightly be thought to be talking nonsense.”

It is not possible to do justice here to the myriad predictions about the economic impact of AI reviewed in the full paper. Instead, this blog post briefly evaluates eight widely debated predictions, in the hope of persuading readers to read the paper for more comprehensive evaluations. To be clear, the focus throughout is on generative AI, as represented by the subset of commercial closed-source Large Language Models (LLMs), and not on smaller domain-specific AI tools or alternative machine-learning approaches.

Claim #1: AI ‘superintelligence’ is just around the corner

All tech CEOs sing from the same song sheet, promising outlandish visions of imminent ‘superintelligence’ ushering in a golden era of radical abundance and scientific progress, in which super-smart generative AI will solve humanity’s biggest problems. The whole AI overinvestment cycle is founded on precisely this claim. According to Elon Musk (in December 2025), Artificial General Intelligence (AGI) could arrive as early as 2026; OpenAI’s Sam Altman agrees; Anthropic’s Dario Amodei, Google’s Ray Kurzweil and Nvidia’s Jensen Huang think we will get there only around 2029; and Microsoft’s Mustafa Suleyman, Eric Schmidt and Google’s Sergey Brin predict an ‘intelligence explosion’ around 2030. It does not matter if these predictions go wrong: AGI remains permanently ‘around the corner’.

Teapot Alert #1: AI ‘superintelligence’ is not here. Nor is it around the corner

Predictions that AGI is imminent evoke a strong sense of déjà vu. Most of us have lost count of how many times Silicon Valley leaders have promised the imminent arrival of autonomous vehicles, quantum computing, augmented reality and humanoid robots, and it is common knowledge that Silicon Valley’s promised timelines reflect recurring fundraising needs rather than technical realities. However, the claim that AGI is imminent is wrong not merely because of exaggerated timelines, but for a more structural reason: the scaling approach to generative AI is incapable of generating ‘intelligence’, let alone AGI.

Large language models (LLMs) are not intelligent, cannot reason causally, have no ‘conceptual model of the world’ and are not sentient; rather, they are predictive pattern matchers and powerful retrieval and plagiarism machines. Leading models (GPT-5, Claude Opus 4.6, Gemini 3.1 Pro) ‘hallucinate’ on approximately 3 to 8% of general factual ‘closed-world’ questions in controlled evaluations that overlap with the training data, but ‘hallucination’ rates increase significantly on out-of-distribution data: for complex reasoning tasks, error rates can be as high as 30 to 50%. The ‘hallucination’ problem of LLMs cannot be solved by scaling, because it is a structural property of LLMs.

Critical (cognitive) scientists including Gary Marcus, Emily M. Bender, Alex Hanna, Timnit Gebru, Iris van Rooij, Olivia Guest, Erik J. Larson, Melanie Mitchell and many others have been arguing this for years. They are now joined by prominent industry insiders such as Yann LeCun (formerly of Meta AI), Ilya Sutskever (formerly of OpenAI) and Google DeepMind CEO Demis Hassabis. These industry insiders have given up on Silicon Valley’s strategy of reaching ‘superintelligence’ by making LLMs bigger and faster, because that strategy does not, and cannot be made to, work. Today’s LLMs may be good at pattern matching and recognition, albeit at tremendous economic and environmental cost and always with ‘hallucinations’ included, but they do not understand causality and have no working ‘world model’. The generative AI industry’s scaling path to ‘superintelligent LLMs’ has reached a dead end.

Claim #2: The AI boom is not a bubble

Big Tech leaders are in unanimous agreement: the stratospheric AI data-center investments are building the (computing) infrastructure to enable a future of abundance. The computing infrastructure will host the agentic machine-learning algorithms that (in their song book) will change business models, transform the world of work, accelerate science and level up labor productivity and contribute to faster economic growth. These colossal investments will pay off — the ‘fundamentals’ of the AI industry are sound. Don’t worry too much about the circular vendor deals between Nvidia, Oracle, the AI labs, the neocloud companies and the Big Tech platform firms — this is certainly not a house of cards! There’s also no need to be concerned about the slow-motion run on ‘shadow’ private-credit funds that are heavily involved in software firms and the AI-data-center ecosystem. What could possibly go wrong, given how transformative the LLMs are going to be?

Teapot Alert #2: Sorry, but the AI boom is a bubble

Many things could go wrong, in fact, particularly if the LLMs turn out to be less transformative than the hype suggests. Added to this, the U.S. economy is facing stagflationary headwinds, mostly due to the energy-price shock caused by Trump’s Iran war and the closure of the Strait of Hormuz; interest rates will likely stay high and may even increase, while recession fears are quickly growing. The closure of the Strait of Hormuz is disrupting vital global supply chains of computer chips and components essential to the U.S. AI industry, and is also squeezing the indispensable funding flows coming from the Gulf sovereign wealth funds. The AI labs face further funding stresses, because private credit is drying up. The private-credit funds that are heavily exposed to AI data centers and software firms are in trouble, struggling to stop a run on their funds as clients race to get out the door. “It sort of smells like [a crisis] again,” said Lloyd Blankfein, the former CEO of Goldman Sachs. “I don’t feel the storm, but the horses are starting to whinny in the corral,” he said, ominously adding that “We’re getting close to the end of late stages of cycles on this — and we’re due for a kind of a reckoning.”

The biggest reason why the AI overinvestment cycle is not sustainable, however, is that none of the AI firms has a feasible path to profitability. The problem is simple, but unsolvable. The AI labs face escalating training costs, growing inference costs and additional costs from legal challenges. They urgently need revenue growth. To attract users and gain market share, the AI labs have been offering LLM services for free or at fixed monthly rates of, say, $20/month for non-professional users or $200/month for coders and other professional users. These fees do not even cover the operational (inference) cost, because flat fees give users an incentive to make maximum use of the AI models. This is a major reason why the LLM labs continue to make losses. The AI firms cannot simply raise their subscription fees, because they face strong price competition from Chinese rivals that offer roughly similar performance at lower cost. Instead, the AI firms are resorting to rate limits on LLM usage during peak hours.
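The flat-fee problem comes down to simple arithmetic. The sketch below uses the $200/month professional tier mentioned above and assumes a hypothetical inference cost of $10 per million tokens (a placeholder, not a figure reported by any lab); the function names are illustrative only.

```python
# Minimal sketch of flat-fee subscription economics.
# COST_PER_MILLION is a hypothetical placeholder, not reported data.

def break_even_tokens(flat_fee_usd: float, cost_per_million_usd: float) -> float:
    """Monthly token volume at which the flat fee exactly covers inference cost."""
    return flat_fee_usd / cost_per_million_usd * 1_000_000


def monthly_margin(flat_fee_usd: float, tokens_used: float,
                   cost_per_million_usd: float) -> float:
    """Flat-fee revenue minus inference cost for one subscriber in one month."""
    inference_cost = tokens_used / 1_000_000 * cost_per_million_usd
    return flat_fee_usd - inference_cost


FLAT_FEE = 200.0          # the $200/month professional tier from the text
COST_PER_MILLION = 10.0   # hypothetical inference cost per million tokens (USD)

print("break-even tokens:", break_even_tokens(FLAT_FEE, COST_PER_MILLION))
# Hypothetical light, break-even and heavy subscribers.
for tokens in (5_000_000, 20_000_000, 60_000_000):
    margin = monthly_margin(FLAT_FEE, tokens, COST_PER_MILLION)
    print(f"{tokens:>12,} tokens -> margin {margin:+.2f} USD")
```

Under these assumptions, any subscriber who burns more than 20 million tokens a month is served at a loss, and a flat fee gives heavy users every reason to cross that line.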

The alternative to charging a flat fee is to charge users the actual token cost (or the API-equivalent cost). In such a metered system, however, users are forced to buy an LLM service without knowing its price, because there is no way to anticipate how many tokens a particular prompt will burn. Every time the LLM makes a mistake or chases its own tail for a few minutes, using ever more tokens, the user has to pay, and this will discourage use of the LLM. Most users, especially coders, are completely unprepared for the cost of their actual token consumption. A metered system is therefore unlikely to solve the problem, and the AI labs remain stuck in a non-viable business model.
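Why a metered bill is hard to predict can be illustrated with a toy simulation. Every parameter below (price per million tokens, mean tokens per attempt, the chance that an attempt fails and is retried, the retry cap) is a hypothetical assumption, and drawing token use from an exponential distribution is a modeling convenience, not an empirical finding. The point is only structural: when failed attempts are billed too, the same kind of task can cost very different amounts.

```python
import random
import statistics

# Toy model of metered (pay-per-token) billing. Every constant below is a
# hypothetical assumption for illustration, not a real price or usage figure.
PRICE_PER_MILLION = 15.0      # assumed price per million tokens (USD)
TOKENS_PER_ATTEMPT = 40_000   # assumed mean tokens burned by one attempt
FAILURE_RATE = 0.4            # assumed chance an attempt fails and is retried
MAX_ATTEMPTS = 10             # assumed cap before the user gives up


def bill_for_one_task(rng: random.Random) -> float:
    """Metered cost of one task; failed attempts are billed like successful ones."""
    tokens = 0.0
    for _ in range(MAX_ATTEMPTS):
        # Token use per attempt is drawn from an exponential distribution
        # with the assumed mean (a modeling convenience, not an estimate).
        tokens += rng.expovariate(1 / TOKENS_PER_ATTEMPT)
        if rng.random() >= FAILURE_RATE:  # attempt succeeded: stop retrying
            break
    return tokens / 1_000_000 * PRICE_PER_MILLION


rng = random.Random(42)  # fixed seed so the sketch is reproducible
bills = [bill_for_one_task(rng) for _ in range(10_000)]
print(f"median bill:     ${statistics.median(bills):.2f}")
print(f"95th percentile: ${statistics.quantiles(bills, n=20)[-1]:.2f}")
print(f"maximum bill:    ${max(bills):.2f}")
```

The printed median sits well below the 95th-percentile and maximum bills; the exact figures depend entirely on the assumed parameters.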

Claim #3: AI will lead to a white-collar jobs apocalypse

Agentic AI will lead to a ‘white-collar bloodbath’ (Dario Amodei); AI agents can replace every white-collar job in 18 months (Microsoft’s AI chief executive Mustafa Suleyman); entire job categories could be wiped out by AI (Sam Altman); 20% to 50% of the 70 million office workers in the United States will lose their jobs, in a “great disemboweling” of office workers (Andrew Yang); AI will lead to an ‘apocalypse’ for highly educated, humanities-trained, often female, white-collar professionals belonging to the Democratic-Party-leaning diploma class, as AI-tools are stripping them of their economic power and transferring that power to working-class, right-leaning men (Palantir CEO Alex Karp).

Teapot Alert #3: AI will not destroy most white-collar jobs but reshape them

The purpose of these bizarre predictions is to put the fear of AI into workers, while signaling the unprecedented transformative power of LLMs to financial investors. But the predictions of an ‘AI job apocalypse’ trigger an overwhelming sense of déjà vu. Not long ago, Carl Benedikt Frey and Michael Osborne (2013) concluded that nearly 47% of U.S. jobs faced a more than 70% probability of “potentially [being] automatable over some unspecified number of years, perhaps a decade or two.” However, analysis of detailed employment data for more than 600 occupations during 2013 to 2024 shows that the predictions of Frey and Osborne about blue-collar job destruction have turned out to be well off the mark.

Why would the AI era be different? Indeed, most U.S. occupations are sitting comfortably, unthreatened by the AI revolution. New data on AI exposure by occupation from Anthropic suggest that the tasks associated with around 40% of U.S. occupations do not appear in AI usage data at any meaningful level. AI exposure is low to moderate in another 29% of occupations (AI usage covers up to 15% of the tasks associated with these jobs). Combined, more than two-thirds of U.S. occupations are, for now, either entirely unaffected by or only moderately exposed to the new technology.

A closer look at the one-third of U.S. occupations with high AI exposure suggests that AI tools will not replace most jobs but reshape them. Anthropic’s AI-exposure metric has no predictive power for expected job growth by occupation, based on the BLS projections of job growth over the coming decade. To illustrate, not a single radiologist has lost her job because of AI. Employment of structural biologists has, so far, not declined because of AI-based protein-structure prediction. Instead, structural biologists now spend less time determining protein structures and more time interpreting, validating and using them. In fact, AI tools (like AlphaFold) created new jobs for computational structural biologists, bioinformatics experts, cryo-electron microscopists and pharma biologists.

Claim #4: AI will boost labor productivity

This claim is a corollary to claim #3. The prophesied job destruction presupposes that agentic AI tools will raise labor productivity significantly: if one is willing to believe the hyperbole that this automation wave will kick 20% or more of the 70 million office workers in the U.S. to the curb, the remaining workers must be producing considerably more. Erik Brynjolfsson and Jason Furman are on record claiming that AI is already making a measurable impact on U.S. non-farm business sector labor productivity.

Teapot Alert #4: The net impact of AI on productivity will likely be small

Anthropic’s AI-exposure metric shows that two-thirds of U.S. occupations will remain fully or largely unaffected by the new machine-learning tools. This means that the productivity impacts of AI will be concentrated in one-third of U.S. occupations. It is, therefore, to be expected that the AI era will not soon lead to a massive increase in aggregate productivity growth. Most economists agree on this point. A recent survey of 69 economists, 27 AI industry professionals, and 25 AI policy professionals concludes that most unconditional economic forecasts of productivity growth during the coming AI era (2025-2035) are rather close to historical trends. The economists in this survey predict a modest increase in U.S. productivity growth of around 0.2 percentage points in 2030. This is similar to the estimate by Alexander Arnon (2025), writing for the Penn Wharton Budget Model, but more than twice as large as the deliberately conservative (and reasonable) estimate by Daron Acemoglu (2025).

The estimated aggregate productivity impacts of AI are profoundly disheartening. Based on Arnon’s estimate, it can be calculated that AI will add a cumulative total of $2.9 trillion (in constant 2017 dollars) to the baseline C.B.O. growth path of the U.S. economy during 2025-2035. These extra trillions of dollars are generated by a projected cumulative investment of $4.5 trillion (in constant 2017 dollars) by the AI industry over the same period.

Let these numbers sink in. The macroeconomic return to the gigantic AI-capital expenditures will most likely be negative over the next decade. The macroeconomic returns to AI capital expenditure could even be smaller, because the percentage net cost savings from AI may well be lower than is generally assumed. The point is this: the use of AI tools not only generates benefits but also costs — and many of these costs are externalized as collateral damage and lower productivity.
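The arithmetic behind this claim can be spelled out with the two cumulative figures from the previous paragraph, both in constant 2017 dollars for 2025 to 2035. Here ΔY (added output) and I (AI investment) are our own labels, and the comparison deliberately treats added output as the only return on the investment.

```latex
\begin{aligned}
\Delta Y - I &= 2.9 - 4.5 = -1.6 \quad \text{(trillion 2017 dollars)} \\
\frac{\Delta Y}{I} &= \frac{2.9}{4.5} \approx 0.64
\end{aligned}
```

On these estimates, each dollar of AI capital expenditure would add roughly 64 cents of output over the decade.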

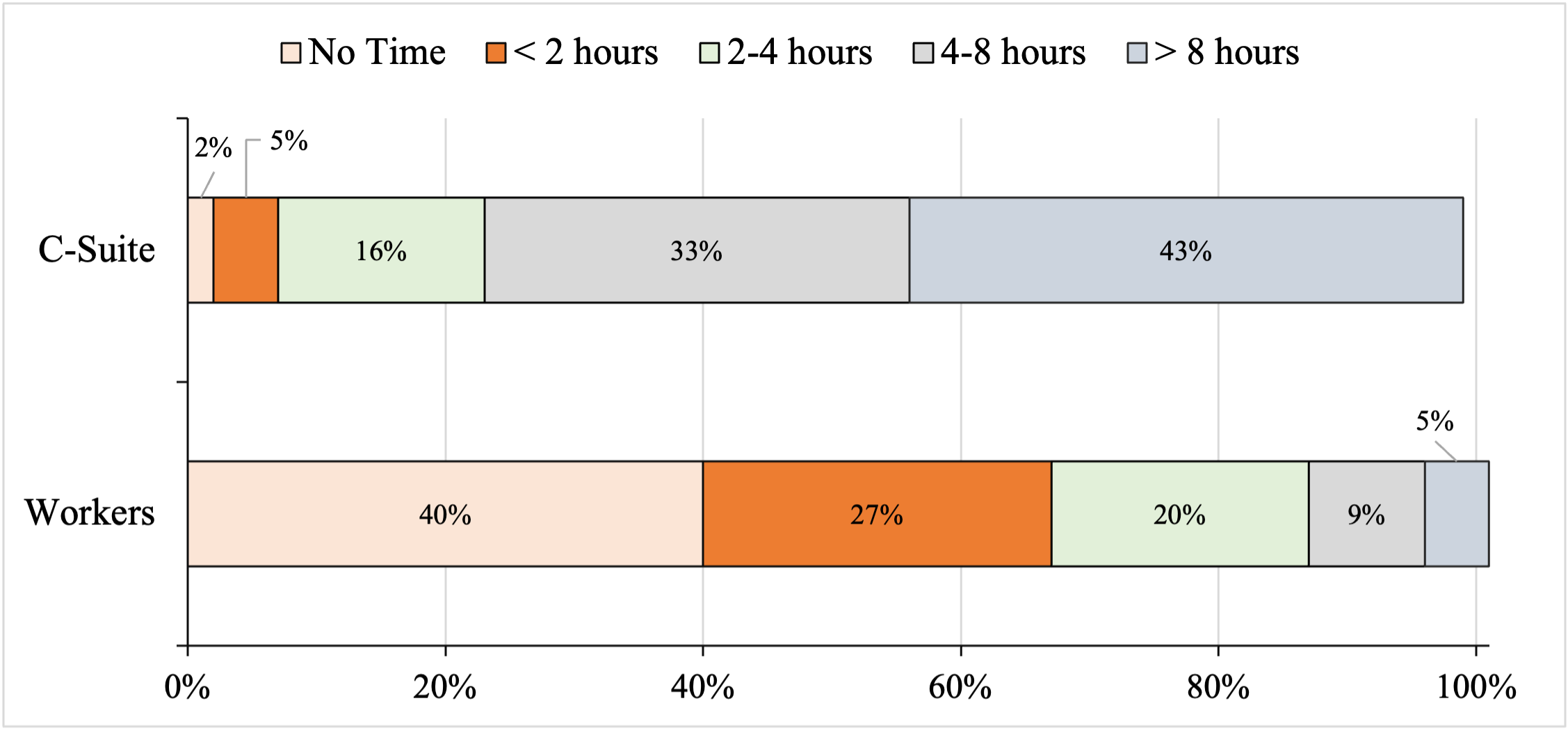

For one, AI-generated ‘work-slop’ is hurting worker motivation and productivity. The C-suite thinks AI is a tool for enhancing business productivity, but employees tell a very different story. The Wall Street Journal reported on findings by research firm Section, which surveyed 5,000 white-collar workers from companies with more than 1,000 employees (Figure 1). More than 70% of the corporate executives in the survey said they were “excited” by AI, and 43% of them said the tools have saved them more than 8 hours of work per week. But non-management workers had a very different take on AI. Almost 70% of this group said AI made them feel “anxious or overwhelmed,” 40% said the tools saved them no time at all, and 27% said they saved less than 2 hours a week. Many employees report that any time saved by using AI is offset by the need to correct AI output or work through confusion and rework. Four out of 10 employees would be fine never using AI again. Dealing with work-slop is demotivating and drains worker productivity. It also breeds distrust within the organization: colleagues use AI to produce a report, and you have to work overtime to correct the mistakes, fill in the gaps and remove the non-existent references.

Figure 1. How Much Time Do You Think You Save Each Week by Using AI? (Percentages). Source: Ellis (2026). Note: Totals may not add up to 100% because of rounding.

A survey of 3,750 executives and employees by software company WalkMe finds that, even though 81% of executives think their AI tools have “significantly improved productivity,” their workers are actually wasting eight hours per week cleaning up AI slop, the equivalent of 51 work days a year. AI is not delivering.

AI is causing plenty of other collateral damage. AI slop is drowning U.S. courts, causing an enormous waste of time and money. AI web crawlers are mining the web for ever more content to feed into their LLM mills, aggressively hammering public-interest websites with traffic spikes that can reach ten or even twenty times normal levels within minutes, causing performance degradation, service disruption and higher operational costs. Reliance on generative AI tools has been found to erode analytical reasoning and critical thinking, and to lead to a decline in competence maintenance and output assessment. In healthcare, AI dependence has been found to diminish diagnostic reasoning and clinical judgment, reduce the retention of tacit knowledge, and weaken ethical sensitivity and moral judgment (Ferdman 2025). Science is drowning in AI slop. AI tools themselves are creating new cybersecurity threats that may hurt the bottom lines of firms.

The use of AI code assistants compromises the quality of code. GitClear analyzed 211 million lines of code across major tech companies and found a fourfold growth in copy-pasted code (meaning that AI tools are duplicating logic instead of generating new logic), a decline in code longevity, and a decline in refactoring, the practice that is essential for the long-term maintainability of code. A recent study tested 18 AI coding agents on maintaining 100 real codebases, each spanning 233 days. AI agents can produce code that passes benchmark tests on one-time, static software engineering tasks such as bug fixing, but 75% of the tested agents, including Cursor, Claude Code and Devin, break previously working code during long-term maintenance tasks. AI-generated code favors short-term copy-pasted solutions over long-term design and ignores architectural consistency. The result is software that works today but becomes painful to change tomorrow.
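For readers who do not write software, a toy example may help show why duplicated logic makes code painful to change. The functions and the tax rule below are hypothetical and are not drawn from the GitClear data or the maintenance study: in the copy-pasted version, a later patch updated one copy of a rule and missed the other; in the refactored version, the rule lives in a single helper.

```python
# Copy-paste style: the same tax rule is duplicated. A later patch raised
# the rate in one copy but missed the other, so the two now disagree.
def invoice_total_copied(net: float) -> float:
    return round(net * 1.21, 2)   # patched copy

def receipt_total_copied(net: float) -> float:
    return round(net * 1.20, 2)   # forgotten copy, silently stale


# Refactored style: one shared helper, so a rate change is a one-line edit.
TAX_RATE = 0.21

def add_tax(net: float, rate: float = TAX_RATE) -> float:
    return round(net * (1 + rate), 2)

def invoice_total(net: float) -> float:
    return add_tax(net)

def receipt_total(net: float) -> float:
    return add_tax(net)


print(invoice_total_copied(100.0), receipt_total_copied(100.0))  # disagree
print(invoice_total(100.0), receipt_total(100.0))                # agree
```

The refactored version is a few lines longer, but a rate change can no longer leave stale copies behind; this consolidation is what refactoring provides and what copy-paste code skips.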

Perhaps the most dangerous outcome is that companies have dramatically reduced entry-level hiring, believing that AI can handle junior tasks. But junior developers don’t just write code; they grow into future senior engineers. When that talent pipeline breaks, the entire software industry will feel it. In about five to seven years, there will be a shortage of senior developers that is going to be genuinely catastrophic. And it is entirely self-inflicted, because it is not AI tools that are replacing junior engineers, but bad business leadership.

Claim #5: AI is already destroying jobs in industries with a high AI-exposure

New research by Goldman Sachs economists claims to find that AI is already a measurable drag on the U.S. job market, erasing roughly 16,000 net jobs per month over the past year, with the pain falling hardest on Gen Z and entry-level workers. Software development postings are down sharply from their peak and remain well below pre-pandemic levels. Employment has declined since late 2022 in the 10% of occupations most exposed to AI, even as total U.S. employment increased. The decline in employment in AI-exposed occupations is particularly pronounced for those under age 25, and it is due not to layoffs but to a low hiring rate for young workers entering the labor force.

Teapot Alert #5: One swallow does not make a summer

It is too early to blame AI alone for the low hiring rate for recent college graduates and other entry-level workers. Macroeconomic context matters. In March 2020, the Fed cut interest rates to nearly zero and held them there for two years. Borrowing cost almost nothing, and companies spent accordingly. Big Tech, as a whole, hired employees by the millions. This period was followed by the fastest monetary tightening cycle in 40 years, which raised the cost of capital for firms. The low hiring rates and layoffs in the tech industry thus represent a “rolling back of over-hiring that happened in the past five years” (in response to monetary tightening) rather than AI displacement.

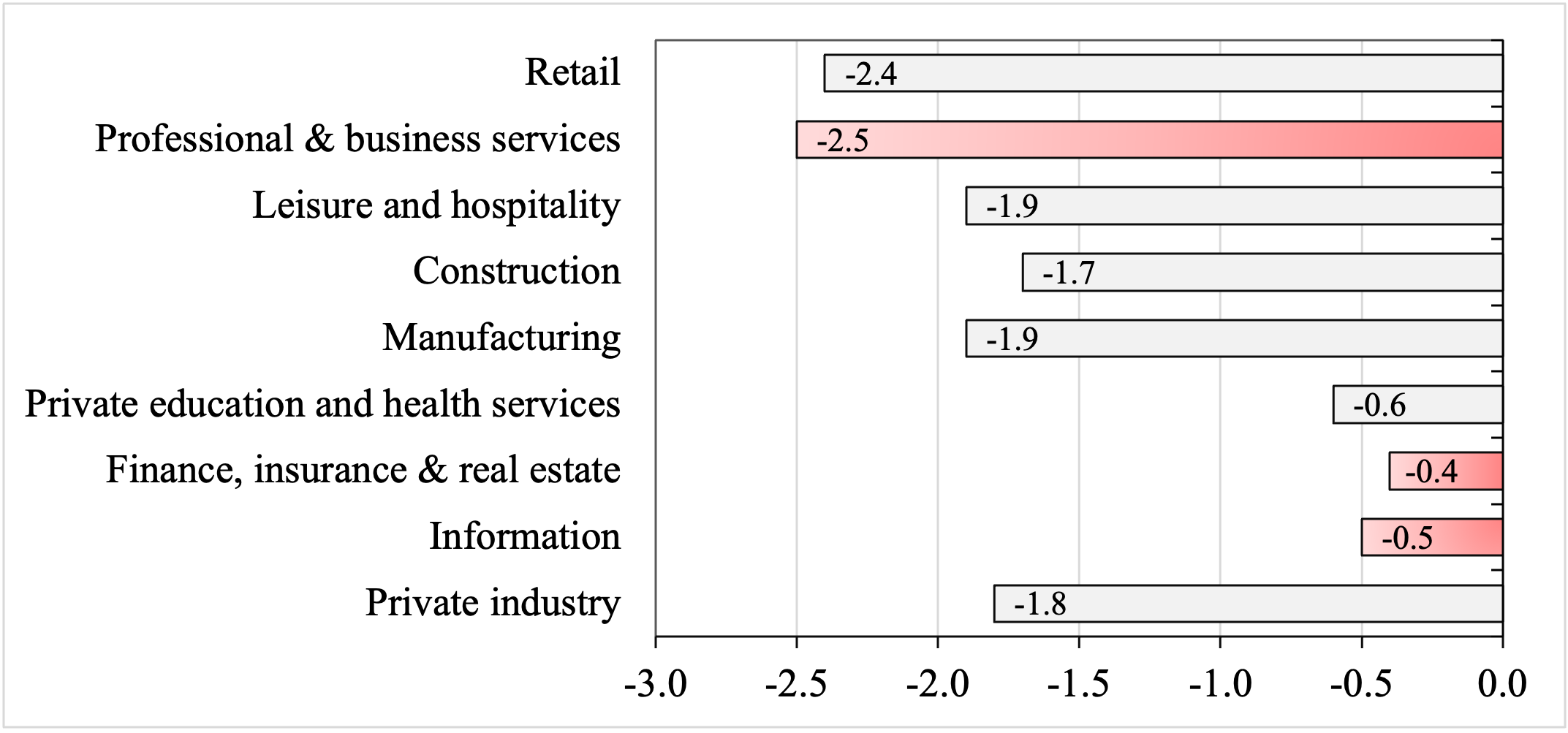

Private-sector hiring rates, which peaked in November 2021, have declined in all U.S. industries (Figure 2), not just in the AI-exposed ones (e.g., ‘Information’, ‘Finance, insurance and real estate’, and ‘Professional and business services’). Nearly 60% of U.S. hiring managers surveyed by Resume.org (2026) said that they emphasize AI’s role when reducing hiring or cutting jobs, because this is viewed more favorably than stating the true reason (i.e., financial constraints). A March 2026 Federal Reserve study analyzed data from more than a million firms and found no evidence that reduced job postings are due to AI adoption. Even Sam Altman has called out corporate ‘AI washing’, the practice of blaming AI for layoffs made for financial reasons.

Figure 2. Hiring Rates in Major Industries (Percent Change, November 2021 to February 2026). Source: FRED database, based on BLS data from the Job Openings and Labor Turnover Survey (JOLTS). Notes: The hiring rate is defined as the number of workers newly hired in a month as a percentage of total employment. The red bars indicate the industries with the highest AI exposure.
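The two quantities behind Figure 2 can be written out from the note's definition. The symbols are ours: H_t is the number of hires in month t and E_t is employment. As a hypothetical example, an industry whose hiring rate fell from 4.5% to 3.5% would show a change of about -22% in the figure, even though the rate itself fell by only 1 percentage point.

```latex
\begin{aligned}
h_t &= 100 \times \frac{H_t}{E_t} \\
\%\Delta h &= 100 \times \frac{h_{\text{Feb 2026}} - h_{\text{Nov 2021}}}{h_{\text{Nov 2021}}} \\
\text{e.g. } 100 \times \frac{3.5 - 4.5}{4.5} &\approx -22.2
\end{aligned}
```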

Claim #6: AI will mostly destroy bullshit jobs and create better ones

One in five U.S. workers say their jobs make no meaningful contribution to the world. These are generally office jobs, especially managerial (assistant) positions, whose status is often defined by how many people report to the manager rather than by the actual work done. The late David Graeber called white-collar jobs that even the people performing them consider meaningless ‘bullshit jobs’. It is claimed that LLMs can automate the mundane tasks and mind-numbing roles that make up these ‘bullshit jobs’. AI tools can be used to mimic the performative layer of white-collar office work. Drafting emails. Creating status updates. Rewording reports. Polishing presentations. Transcribing and summarizing meetings that arguably didn’t need to happen in the first place. Sam Altman floated the idea that the work that might imminently be transformed or eliminated by AI isn’t “real work.”

Once these unfulfilling bullshit office jobs are eliminated, the remaining workers will be ‘empowered’ to focus on meaningful and creative work.

Teapot Alert #6: No, AI will create a lot of bullshit jobs

The use of AI will create new jobs, because AI tools have to be monitored, supervised, chaperoned and curated: they generate critical errors, synthetic word slop, science slop, legal slop, medical slop, marketing slop, social media slop, new cybersecurity risks, brittle code and mounting technical debt, all of which could kill businesses and institutions when mission-critical activities are involved. Accordingly, the adoption of AI will create a layer of prompt engineers and supervisory managers overseeing ‘AI co-workers’ in novel bullshit jobs: correcting slop, maintaining code, protecting the integrity of digital systems, identifying ‘hallucinations’ and fake news and, more generally, combating the ‘enshittification’ of the workplace, the internet and society.

Firms will also install AI-ethics committees to ‘AI-wash’ or ‘baby-sit’ whatever models are deployed, and new middle-management roles will emerge to explain AI and AI outputs to gullible, ignorant executives who do not understand them. There will be ‘flunky’ committees and task forces for the Alignment of AI Strategy, Senior Directors for Prompt Integrity and Vice-Presidents for AI Cybersecurity, and legal departments and legal roles will grow exponentially, because firms will do everything they can to escape liability for all the errors and damages their AI tools will produce. The result will be not fewer jobs, but different jobs: all ‘overhead’ jobs, whose primary function is not production but insurance and reassurance, “reassurance that the hierarchy remains intact, that human beings remain ‘in the loop’, and that someone, somewhere, is still nominally responsible”.

On top of all this, AI-driven Taylorism will create further overhead jobs in employee surveillance. After all, the ‘iron law’ of surveillance technology is that if it can be used, it will be used. Armed with ever more means of monitoring, employers are tracking PC screens, logging keystrokes, analyzing internal communications and even scanning employees’ faces for signs of fatigue. AI-powered systems monitor the tone of internal communications, track the frequency of messages and log application use across digital platforms. Task completion, web browsing and response times are filtered through algorithms to determine individual worker productivity scores, which are used to decide on promotions and (bonus) pay. AI tools are employed to conduct automated background checks (of employees and job applicants) that scrape public records, financial data and social media activity, with utter disregard for privacy and human decency, to assess ‘risk’ for the employer. The result is a dystopian workplace without privacy or trust. Permanent monitoring by ‘goon’ workers creates a chilling effect and undermines productivity, particularly in collaborative and creative environments where psychological safety, trust and freedom are key to innovation.

Claim #7: Anthropic’s Mythos is too dangerous to release publicly

Mythos is a new AI model from Anthropic that is claimed to be so powerful that it found thousands of vulnerabilities in existing operating systems and web browsers. Unexpectedly, Mythos Preview also developed a moderately sophisticated multi-step exploit to gain access to the internet, which it had been designed to be unable to do. Mythos is claimed to be the “best-aligned” AI model by a significant margin, with a level of coding capability at which it “can surpass all but the most skilled humans at finding and exploiting software vulnerabilities.” Positioning itself as the world’s only responsible-AI company, prioritizing accountable AI stewardship, Anthropic decided against releasing Mythos to the general public, on the grounds that doing so would dangerously threaten cybersecurity across the internet. This ethical position has a price, however. Anthropic launched Project Glasswing, a cybersecurity-defense initiative that gives the Mythos model exclusively to high-paying clients including Amazon, Apple, Google, Microsoft, Nvidia, CrowdStrike, JPMorgan Chase, Cisco, Broadcom, Palo Alto Networks and the Linux Foundation, in exchange for cash ($4 million in direct donations and $100 million in Claude usage credits).

Teapot Alert #7: It is not about Mythos; weak real-world cybersecurity systems urgently have to be strengthened

The arrival of the Mythos model shows that real-world cybersecurity systems are weak and can be broken, and that average attackers can be effective using AI tools at scale. However, Anthropic is cleverly overselling the capabilities of Mythos. First, the successful hacking attempts by Mythos Preview targeted Firefox’s JavaScript shell, a stripped-down debugging environment that does not include the browser’s real-world security defenses. The actual Firefox browser has multiple layers of defense against malicious code; Anthropic focused on just one layer. To focus the examination, Mythos was also given additional system prompts. All this indicates that there is a meaningful gap between the test and the real defenses that an attacker would actually face. Second, Mythos Preview found a now-patched bug that could be used to remotely crash OpenBSD, an open-source operating system used in critical infrastructure such as firewalls. AISLE, an AI cybersecurity startup, tested eight cheap, small, open-weight LLMs to see whether they could find the 27-year-old bug. Every single model tested found it, including one that costs $0.11 per million tokens, a fraction of Anthropic’s token cost. On a basic security reasoning task, cheap small open-weight models outperformed most expensive frontier models from every major lab, including Anthropic. The key to effective AI cybersecurity capabilities is the system for finding vulnerabilities, not the size of the AI model.

Claim #8: We have to move the needle toward ‘pro-worker’ AI

MIT economists Daron Acemoglu, David Autor and Simon Johnson (2026) want this to happen. The MIT trio distinguishes between ‘bad’ and ‘good’ (pro-worker) AI and wants government (Trump?) to push AI policy and regulation in an unambiguously ‘pro-worker’ direction, promoting technologies that make human skills and expertise more valuable by creating new tasks. To achieve this, they propose a set of neoliberal policy interventions, including building AI expertise in government, encouraging competition, giving workers a ‘voice’ (in what exactly?), tax reform, preventing expertise theft and getting rid of occupational licensing. They do not propose deeper reforms of shareholder capitalism and finance.

Teapot Alert #8: Commercial AI will not be pro-worker, sorry

Calls for ‘pro-worker’ AI ignore several inconvenient truths: the inherent drive for automation and for worker exploitation and control that is embedded in (shareholder) capitalism, the historical collapse of labor unions, the rise of electronic Taylorism, the regulatory capture of government by the Big Tech firms, and pressures from global competition to cut labor costs. Easy calls for ‘pro-worker’ AI do not engage with the actual reality in which Jeff Bezos is weaponizing Amazon’s algorithmic surveillance tools to prevent workers from organizing, crushing pro-union movements inside his warehouses; Elon Musk demands that his employees work long overtime hours without pay or sleep, while he himself hangs around and tweets; Sam Altman prioritizes ‘growth’ over ‘safety concerns’ while cozying up to rich dictators in the Gulf who are not known for their human-rights record; and Mark Zuckerberg adopts a hostile posture towards his own workers, cracks down on free speech within his corporation, ends worker involvement in decision-making and abolishes all diversity initiatives.

Firms use AI as a threat to discipline workers, or lay them off with the aim of rehiring them as gig workers or finding someone else in the supply chain to do that work instead. “Your boss wants to use surveillance data to cut your wages” sums it up well, and this chilling illustration of AI-based surveillance wage discrimination (common in U.S. healthcare, customer services, logistics and retail), provided by Cory Doctorow, hammers home the point:

“algorithmic wage discrimination draw on external sources of data to set the price of your labor. That’s the situation for contract nurses, whose traditional brick-and-mortar staffing agencies have been replaced by nationwide apps that market themselves as ‘Uber for nursing.’ These apps use commercial surveillance data from the unregulated data-broker sector to check on how much credit card debt a nurse is carrying and whether that debt is delinquent to set a wage: the more debt you have and the more dire your indebtedness is, the lower the wage you are offered.”

Carrying a credit-card balance, taking out a payday loan, or discussing one’s indebtedness on WhatsApp or Instagram can lead to lower wages. “AI is not going to take your job, but it will likely make your job shittier,” says Alex Hanna, director of research at the Distributed AI Research Institute.

Sober up: AI sans ketamine

The prevailing understanding of the economic impacts of AI is mistaken: AI will lead neither to ‘superintelligence’, massive job destruction, unprecedented technological unemployment and a recession, nor to gigantic (aggregate) gains in labor productivity and an unprecedented acceleration of technological progress and economic growth. The aggregate-level impacts of AI will be rather humdrum: some occupations will vanish because of automation; existing jobs will be reshaped by AI, and new occupations, tasks and roles will come into being to proctor and curate the AI tools; many of these new jobs will be bullshit jobs; most occupations, however, will remain unexposed; aggregate labor productivity growth may increase a bit (because AI tools augment labor), but at the same time the costly collateral damage of AI will grow exponentially over time and lower productivity growth. The more AI slop is generated, the more overhead labor will be needed to clean up the mess, and the less likely it is that we will see the AI age in the productivity statistics. Given all the above, the current AI overinvestment cycle cannot be sustained.