The use of bibliometrics to identify trends may be fostered by the realization that recasting historical claims in charts and figures facilitates online dissemination and conversation with economists. The spread of text-mining and network analysis techniques to answer more complex questions on dissemination, dominance, fragmentation, and field dynamics will require a more active move toward systematic training and the establishment of scientific and publishing standards, as will the development of new databases. The use of econometric techniques to test causal claims on the history of the discipline is nowhere to be seen, presumably because historians of economics are not willing to engage in methodological debates on the status of their objects and of their scientific practices.

Last week, I attended a history of social sciences seminar in Paris. Two of the five papers presented relied on quantitative analysis: network analysis and cross-citation analysis, respectively. Earlier this month, Matt Panhans and John Singleton made a splash on SSRN and in the econ blogosphere with a paper showing bibliometric evidence of the increased use of quasi-experimental techniques in economics. At the time, I was refereeing two papers making extensive use of text analysis. In the History of Economics Society conference session I will chair and the one I will comment on next week, 50% and 75% of the papers, respectively, involve quantitative analysis, spanning the casual use of N-grams, clever keyword-based bibliometric analysis, and a more sophisticated analysis of reciprocal influences between economics and other sciences through cross-citation analysis. None of the authors of these papers is over 40.

A quantitative turn?

To understand what I see going on in my field, it is useful to compare the present situation with that of 1997. That year, Roger Backhouse, Roger Middleton and Keith Tribe published a thorough survey of the quantitative history of economics, its limitations and its prospects. They argued that quantitative work taking economic science as its object was primarily aimed at studying rankings (of departments, journals, individuals), discrimination among economists, and sociological characteristics of the profession (editorial practices, refereeing and publishing practices, co-authorship, opinions). Quantitative work had limited currency among historians. It was used to answer questions on the professionalization of the discipline and the development of economic theory, to study departments as producers of economists and economics, and to map the influence of individual economists on other economists or the relationships of economists to policy-making. The authors explained the value of quantitative work in history: it focuses on the average economist rather than on exceptional individuals, which is important for understanding professionalization, policy influence, and the differences between American and European economics; it allows statistical tests of the many generalizations historians continuously make; and it points to puzzles that need explanation. The development of quantitative analysis was, however, plagued by several issues, they warned: it was mostly focused on American data, and the immense amount of time needed to compile databases led to ad hoc “quick and dirty” analyses that could not be built upon. To these could be added the lack of standards for collecting, analyzing and maintaining data, and the difficulty of grasping the potential of new data sources.

Eighteen years later, what has changed? Ranking metrics have become a field of their own, and quantitative analyses of the economic literature have produced new historical insights on submissions, co-authorship, citation patterns, shifts in methods, and sociological assessments of economists’ political bias and perceived superiority. In the history of economics, the democratization of the Web of Science, JSTOR and EconLit databases and the development of ready-made visualization tools, such as Wordle or the N-gram viewer, have stimulated a limited number of bibliometric studies, although the genre may well be taking off right now. New network visualization and text-mining techniques are also being used to study new questions, though econometric models have yet to be used to test historical claims. My hypothesis that the number of papers using quantitative techniques has substantially increased cannot be tested, since many of them are working papers or under submission, and are often not publicly available. My point is rather to show that each strand of quantitative history faces different challenges.

Descriptive quantitative analysis: disseminating history of economics?

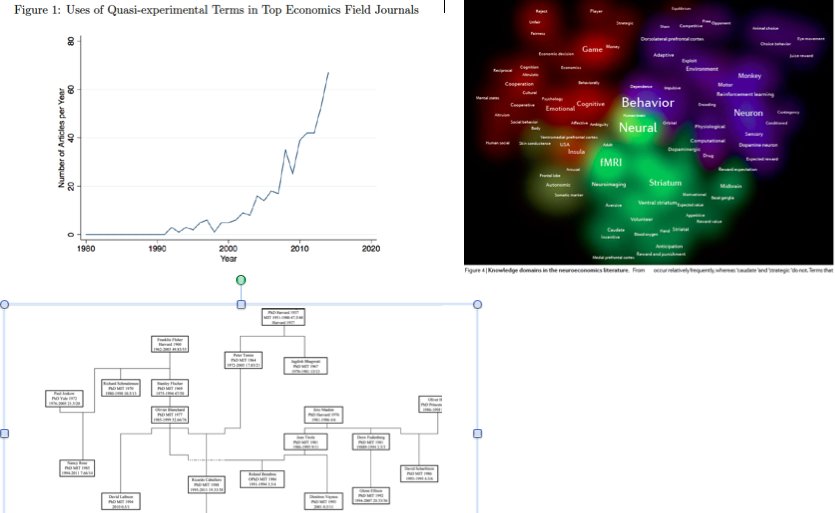

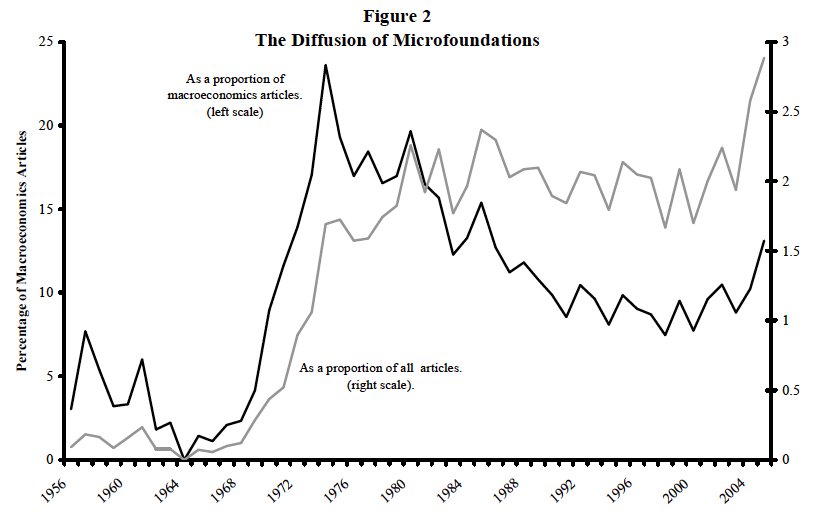

The types of bibliometric and descriptive methods surveyed by Backhouse et al. still have limited currency. They are used to count objects (publications on a given subject, faculty, PhD placements) and to identify, document and represent trends (the shift toward quasi-experimental techniques, the spread of microfoundations in macroeconomics, the institutionalization of econophysics). They are especially relevant for investigating the dissemination of some economists’ ideas, such as those of Koopmans or Bachelier.

Rise of quasi-experimental techniques, Panhans & Singleton (2015)

Diffusion of microfoundations, Hoover (2012)

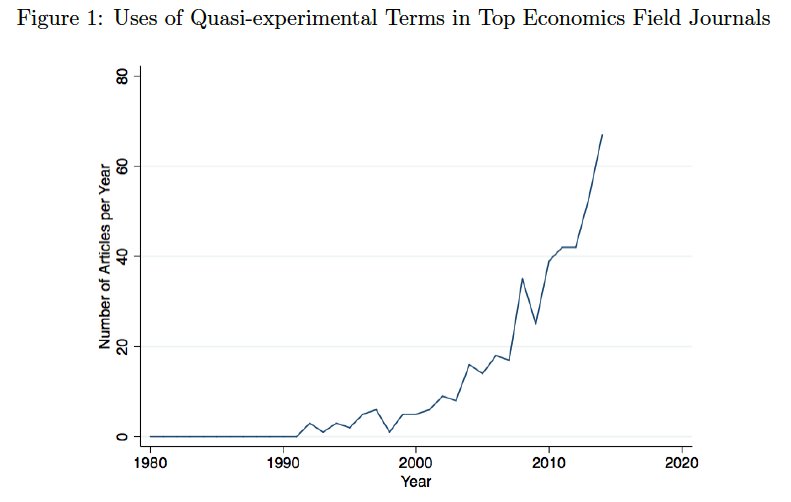

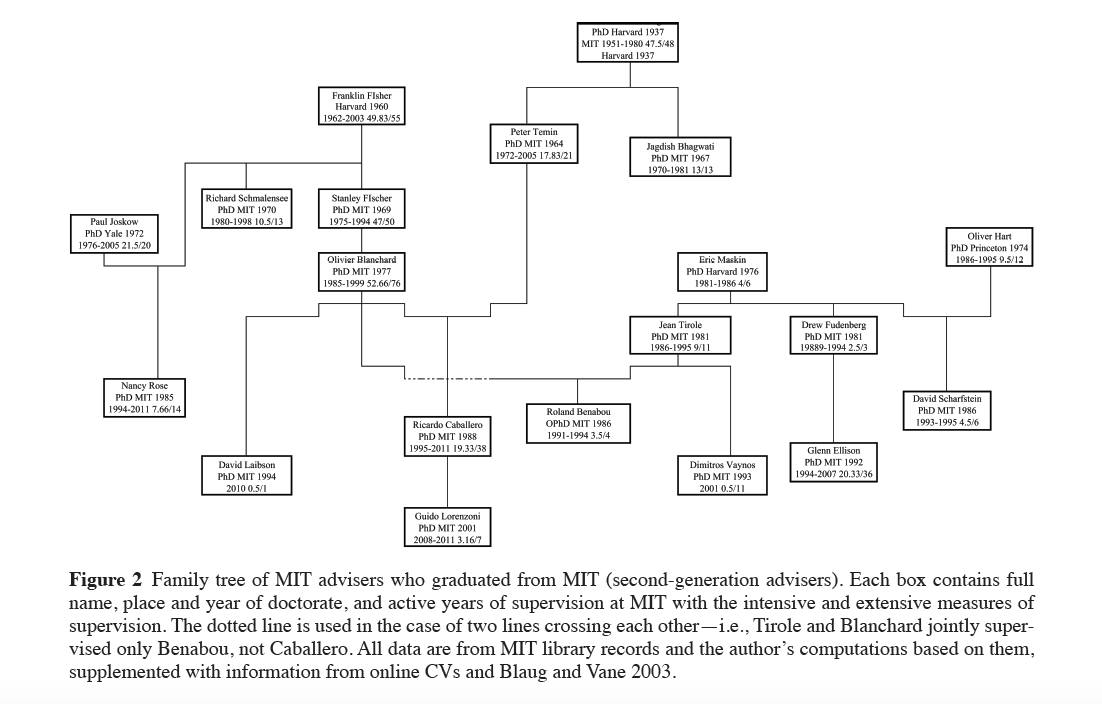

Family Tree of MIT, Svorenčík (2014)

Since there is no standard for reporting the dataset used and the methodological choices made (almost no paper comes with a technical appendix), these papers are sometimes difficult to discuss. Greater attention should be given, for instance, to how papers from a chosen sample are categorized. The content and organization of the JEL codes have undergone dramatic changes since the 1940s, as have the meanings of “theoretical,” “empirical,” “applied work,” “mathematization,” “behavioral economics,” and “experiments.” Using such categories to study decade-long transformations therefore assumes a stability that simply wasn’t there. Likewise, further qualitative analysis of economists’ publishing and citation patterns across time, countries, specialties, and institutions would help settle endless controversies over the relevance of key assumptions in bibliometric work.
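Much of the descriptive counting discussed above boils down to computing, for each period, the share of publications that match a classification rule. The Python sketch below is a minimal illustration under loudly stated assumptions: the records and the keyword list are invented for the example and are not taken from any study cited here. It shows why the keyword list and the period bins are methodological choices that belong in a technical appendix.

```python
from collections import Counter

# Invented toy records, (publication year, title), for illustration only.
# A real study would export records from a database such as JSTOR or EconLit.
RECORDS = [
    (1975, "A structural model of household labor supply"),
    (1978, "Existence of equilibrium with incomplete markets"),
    (1986, "Estimating wage effects with difference-in-differences"),
    (1989, "Optimal taxation in an overlapping generations model"),
    (1993, "Schooling returns from a natural experiment"),
    (1997, "Class size effects: a regression discontinuity design"),
]

# Hypothetical keyword list. It is a methodological choice and should be
# reported in full, since vocabulary shifts across decades.
KEYWORDS = ("natural experiment", "difference-in-differences", "regression discontinuity")


def decade(year: int) -> int:
    """Bin a year into its decade, e.g. 1986 -> 1980."""
    return year - year % 10


def keyword_share_by_decade(records, keywords):
    """Share of records in each decade whose title contains at least one keyword."""
    totals, hits = Counter(), Counter()
    for year, title in records:
        d = decade(year)
        totals[d] += 1
        text = title.lower()
        if any(k in text for k in keywords):
            hits[d] += 1
    return {d: hits[d] / totals[d] for d in sorted(totals)}


if __name__ == "__main__":
    for d, share in keyword_share_by_decade(RECORDS, KEYWORDS).items():
        print(f"{d}s: {share:.0%}")
```

In this toy sample, dropping "regression discontinuity" from the keyword list halves the 1990s share while leaving the other decades unchanged: the "trend" partly reflects the classification rule, which is why that rule needs to be reported.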

A tendency to support broad historical claims more systematically with descriptive statistics is discernible, but it is unclear whether the appeal of such techniques derives from the analysis, the visualization, or the publicity they allow. The recent success of quantitative historical work points to the dissemination power of statistical evidence, charts, figures and networks. Encapsulating a key trend in a visual helps attract attention on blogs and Twitter, and recasts historical claims in a language more congenial to economists. Those historians who have not given up on attracting economists’ attention may thus use quantitative techniques more systematically as dissemination tools.

New data sets and new tools to describe economists and their work: organizing training, setting standards for quantitative history

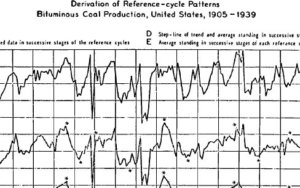

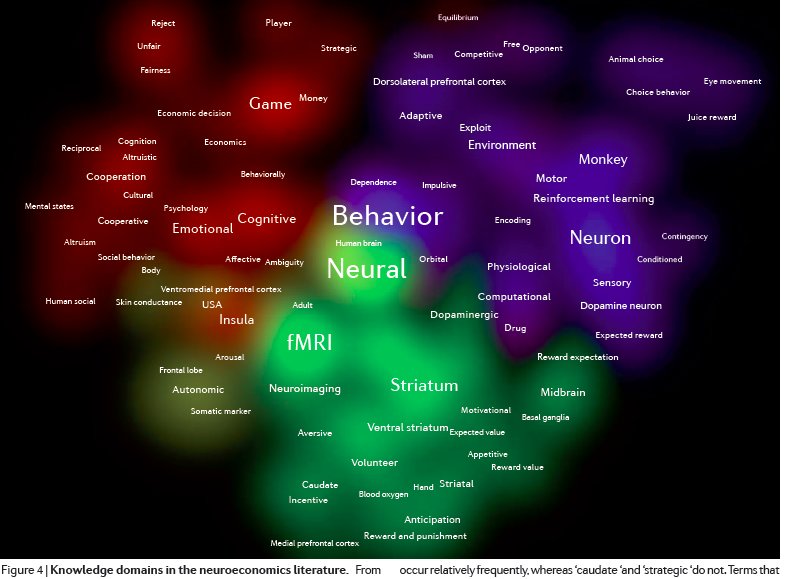

A significant number of quantitative history working papers produced over the past couple of years, however, seek to address more complex questions: economists’ genealogy, the changing structure of the economic discipline, reciprocal influences between economics and other sciences, the impact of contexts on economists’ subject matter, or the relationship of theory to applied work. Some of them use more sophisticated content analysis, bibliographic coupling and automated community detection of the kind Yves Gingras, for instance, has developed at the CIRST to study the structure of physics. Others produce more sophisticated visualizations through network analysis, some of which enable the exploration of the Chicago workshop system and its unifying or fragmenting force within the department, the integration of social and neural sciences into neuroeconomics, and the collaboration patterns among scientists at the Center for Advanced Study in the Behavioral Sciences in the 1950s. The possibilities offered by multivariate analysis, such as cluster or factorial analysis (to study scientific competition and credit, for instance), and by topic modeling are only beginning to be investigated.
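Bibliographic coupling treats two papers as related when they cite the same works: the number of shared references measures the strength of the link, and strongly linked papers are then grouped into clusters. The Python sketch below is a minimal illustration under stated assumptions: the citation lists (papers P1 to P5, references R1 to R7) are invented, and grouping by connected components above a threshold is a deliberately crude stand-in for the community-detection algorithms used in actual studies, not a reconstruction of any of them.

```python
from itertools import combinations

# Invented toy data: each hypothetical paper maps to the set of works it cites.
REFERENCES = {
    "P1": {"R1", "R2", "R3"},
    "P2": {"R1", "R2", "R4"},
    "P3": {"R3", "R4"},
    "P4": {"R5", "R6"},
    "P5": {"R5", "R6", "R7"},
}


def coupling_strengths(refs):
    """Bibliographic coupling strength: number of references two papers share."""
    strengths = {}
    for a, b in combinations(sorted(refs), 2):
        shared = len(refs[a] & refs[b])
        if shared:
            strengths[(a, b)] = shared
    return strengths


def groups_at_threshold(refs, threshold):
    """Group papers linked by coupling strength >= threshold.

    Uses connected components (via union-find), a simple stand-in for
    real community-detection algorithms.
    """
    parent = {p: p for p in refs}

    def find(p):
        while parent[p] != p:
            parent[p] = parent[parent[p]]  # path halving keeps trees shallow
            p = parent[p]
        return p

    for (a, b), strength in coupling_strengths(refs).items():
        if strength >= threshold:
            parent[find(a)] = find(b)  # merge the two groups

    groups = {}
    for p in refs:
        groups.setdefault(find(p), set()).add(p)
    return sorted(sorted(g) for g in groups.values())


if __name__ == "__main__":
    for t in (1, 2, 3):
        print(t, groups_at_threshold(REFERENCES, t))
```

Here P3 shares one reference each with P1 and P2, so it joins their group at a threshold of 1 but stands alone at a threshold of 2: the clusters depend on a parameter the analyst chooses, which is one reason such thresholds need to be reported.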

Knowledge domains in the neuroeconomics literature, Levallois et al. (2012)

It is this set of techniques, aimed at visualizing historical data and uncovering robust patterns in them, that may yield the most fruitful insights into the transformation of economics. Yet the more complex the techniques, the more numerous and tricky the issues of quantification, algorithm choice, thresholds and visualization become. And the profession needs more than scientific standards (some of which may be borrowed from history, history of science, sociology of science, etc.) for such techniques to spread. Publishing, replication, and open-data standards are also needed, and history journal editors might look to medical science, biology or political science rather than to economics for good practices. But the crucial element in supporting quantitative history is appropriate training. As Claudia Goldin shows, the mathematization and formalization of economics, as well as the cliometric turn in economic history, exemplify the tendency of tool shocks to pit one generation against another and to marginalize a great number of scholars. No one wants to be the next Rupert Maclaurin or Griffith C. Evans. Setting up training programs, for instance within the many summer schools flourishing in the history of economics, therefore seems appropriate, and requiring that each history of economics dissertation include one quantitative essay is worth discussing.

Another trend is the compilation of new databases. Too systematic a reliance on JSTOR, EconLit and the Web of Science narrows the kinds of questions addressed, so more data on PhDs, career trajectories, grant applications, collaborations, and so forth are needed. These would enable more work like the published prosopographic analysis of successive cohorts of MIT economists, or the study of the leadership hierarchy of the American Economic Association. Impediments to the development of new data sets were extensively discussed by Backhouse, Middleton and Tribe, and little has changed since.

Testing historical claims, identifying relations: harnessing epistemological debates

In a recent survey, Guillaume Daudin documents three uses of quantitative methods in economic history: counting things, exploring data, and establishing links (modeling hypotheses and testing them), the last of which was the thrust of the cliometric revolution. In the history of economics, no such move toward using quantitative techniques to model and test historical claims is yet discernible (one notable exception is Biddle’s 1998 study of the marginalization of institutional economics, which estimates a “differential fertility” model to explain the long-term decline of Wisconsin institutionalism). This may come as a surprise, since most historians of economics are still trained in economics as undergraduates, and sometimes as graduate students. Lack of familiarity with econometric theory and software is therefore not what prevents its application to history. The problem lies elsewhere: testing causal claims (for instance, on the influence of emigration on mathematization, on the reasons for the marginalization or dissemination of an approach or a tool, or on economists’ influence on policy debates) requires an epistemological leap that few historians may be willing to make. Every argument in favor of quantitative techniques (clarity, concision, standardization of scientific proof, unanticipated conclusions) or against them (oversimplification, lack of relevance or realism, unquantifiable objects, undue standardization, esotericism) has a familiar ring. These were the arguments Samuelson, Bowen, Madow, Arrow, Boulding, Knight and Machlup exchanged during AEA debates on the mathematization of the discipline in the 1940s. These were the claims Fogel, Kuznets, Rostow, Fishlow, Temin, Chandler and Hacker put forward during the railroads debate in the 1960s (see ch. 7). These are the ideas discussed by the contemporary Perestroika movement, whose aim is to challenge the dominance of quantitative methods in political science. And they are, to some extent, the threads underlying recurring debates on the state of macroeconomics and economic education.

Historians of economics’ engagement with (or rebuttal of) quantitative techniques is underwritten by collective epistemological and practical debates. What kinds of questions we want to answer with these techniques, which techniques are most appropriate for dealing with historical claims, and what a healthy balance between statistical and qualitative evidence looks like are important questions. These are subjects we should discuss extensively in conference sessions and roundtables. If we don’t, our ability to frame our “quantitative turn” may be confiscated by imperialistic outsiders.